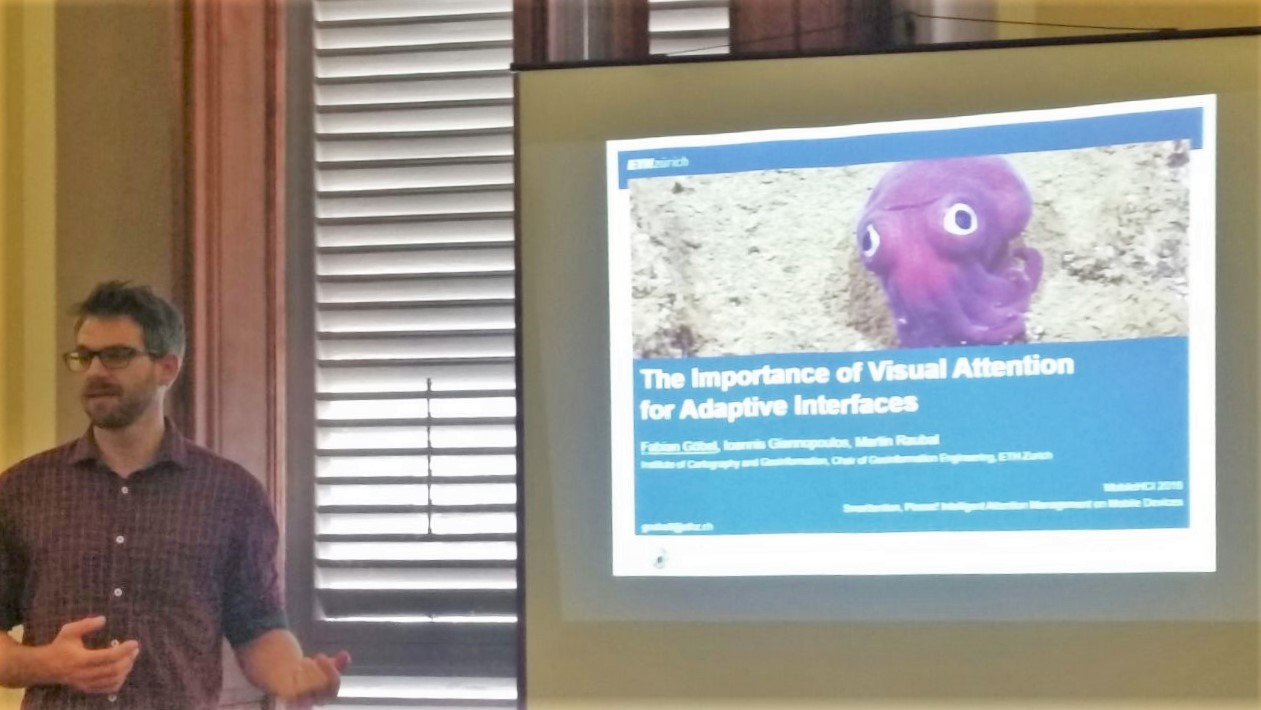

Our article “Gaze-Informed Location Based Services” has been accepted for publication by the International Journal of Geographical Information Science (IJGIS):

Anagnostopoulos, V.-A., Havlena, M., Kiefer, P., Giannopoulos, I., Schindler, K., and Raubal, M. (2017). Gaze-informed location based services. International Journal of Geographical Information Science (accepted). PDF

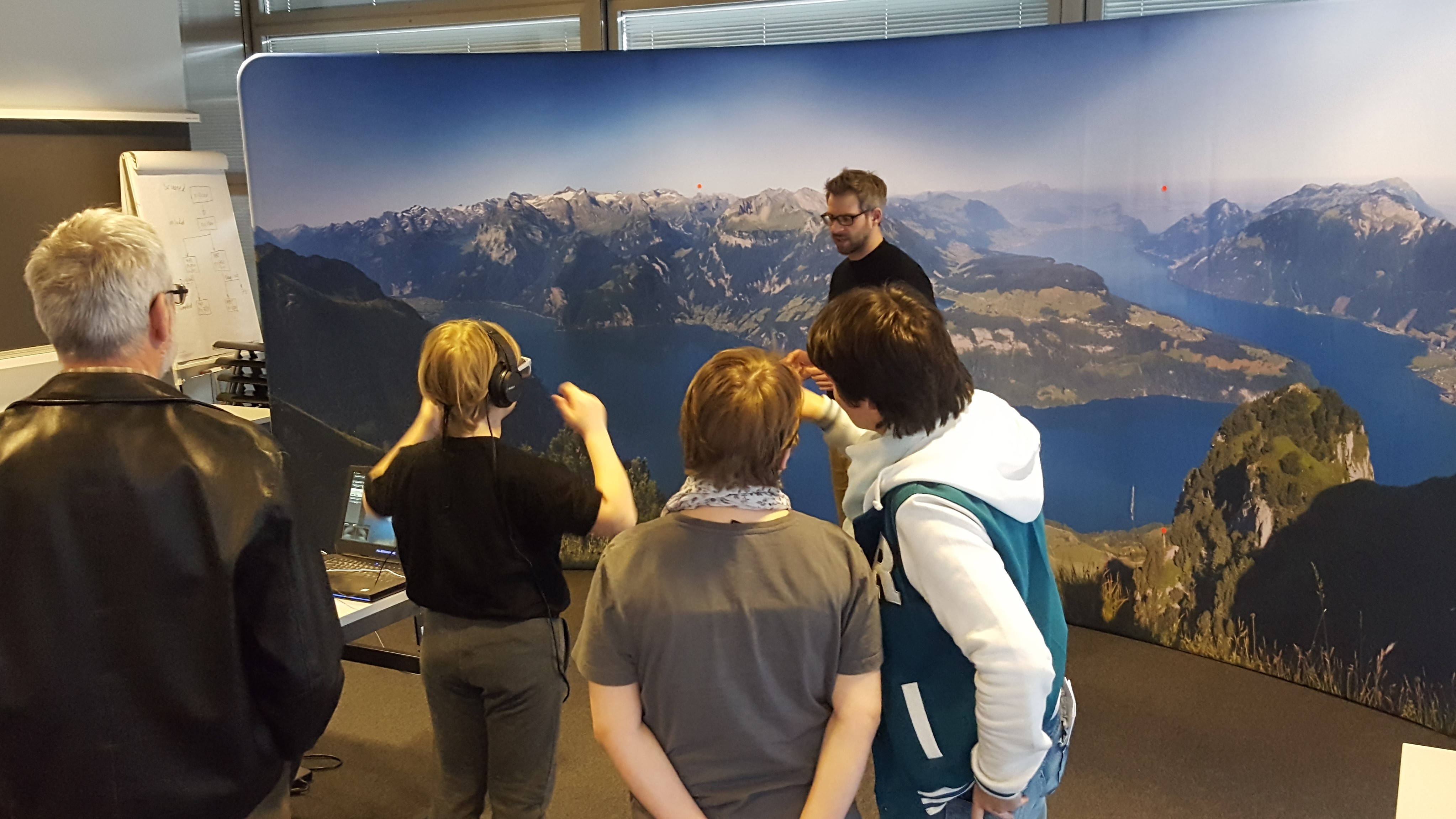

The article introduces the concept of location based services that take the user’s viewing direction into account. It reports on the implementation and evaluation of such a gaze-informed location based service, which has been developed as part of the LAMETTA project. This research was performed in collaboration between the GeoGazeLab, Michal Havlena (Computer Vision Laboratory, ETH Zurich) and Konrad Schindler (Institute of Geodesy and Photogrammetry, ETH Zurich).

Abstract

Location-Based Services (LBS) provide more useful, intelligent assistance to users by adapting to their geographic context. For some services that context goes beyond a location and includes further spatial parameters, such as the user’s orientation or field of view. Here, we introduce Gaze-Informed LBS (GAIN-LBS), a novel type of LBS that takes into account the user’s viewing direction. Such a system could, for instance, provide audio information about the specific building a tourist is looking at from a vantage point. To determine the viewing direction relative to the environment, we record the gaze direction relative to the user’s head with a mobile eye tracker. Image data from the tracker’s forward-looking camera serve as input to determine the orientation of the head w.r.t. the surrounding scene, using computer vision methods that allow one to estimate the relative transformation between the camera and a known view of the scene in real-time and without the need for artificial markers or additional sensors. We focus on how to map the Point of Regard of a user to a reference system, for which the objects of interest are known in advance. In an experimental validation on three real city panoramas, we confirm that the approach can cope with head movements of varying speed, including fast rotations up to 63 deg/s. We further demonstrate the feasibility of GAIN-LBS for tourist assistance with a proof-of-concept experiment in which a tourist explores a city panorama, where the approach achieved a recall that reaches over 99%. Finally, a GAIN-LBS can provide objective and qualitative ways of examining the gaze of a user based on what the user is currently looking at.
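To make the mapping step in the abstract more concrete, here is a minimal sketch of how a Point of Regard could be transferred from the scene-camera frame into a reference image and matched against known objects of interest. It is an illustration under stated assumptions, not the method from the article: it assumes the relative transformation is available as a 3x3 homography `H` (a reasonable model for a distant panorama, but our assumption), and the function names, the rectangular areas of interest, and all numeric values are hypothetical.

```python
import numpy as np

def map_gaze_to_reference(gaze_xy, H):
    """Project a gaze point (pixels in the scene-camera frame) into the
    reference image, assuming H is a 3x3 homography from frame to reference."""
    p = H @ np.array([gaze_xy[0], gaze_xy[1], 1.0])
    if abs(p[2]) < 1e-12:
        raise ValueError("gaze point maps to infinity under H")
    return p[:2] / p[2]  # back from homogeneous to pixel coordinates

def find_object_of_interest(ref_xy, aois):
    """Return the name of the first area of interest (axis-aligned box given
    as x_min, y_min, x_max, y_max) containing the point, or None."""
    x, y = ref_xy
    for name, (x_min, y_min, x_max, y_max) in aois.items():
        if x_min <= x <= x_max and y_min <= y <= y_max:
            return name
    return None

# Hypothetical setup: the current frame is shifted by (100, 50) px
# relative to the reference panorama, so H is a pure translation.
H = np.array([[1.0, 0.0, 100.0],
              [0.0, 1.0, 50.0],
              [0.0, 0.0, 1.0]])

# Hypothetical objects of interest, annotated in reference-image pixels.
aois = {
    "church": (300, 100, 400, 250),
    "bridge": (500, 300, 700, 360),
}

ref_point = map_gaze_to_reference((250.0, 100.0), H)
looked_at = find_object_of_interest(ref_point, aois)
print(ref_point, looked_at)
```

In a real system, `H` would have to be re-estimated for every video frame, for example from feature matches between the frame and the reference image, and the objects of interest would more likely be polygons than boxes.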